AWS Machine Learning Blog

Tag: Generative AI

Build an enterprise synthetic data strategy using Amazon Bedrock

In this post, we explore how to use Amazon Bedrock for synthetic data generation, weighing its challenges against its potential benefits to develop effective strategies for applications across multiple industries, including AI and machine learning (ML).

Shaping the future: OMRON’s data-driven journey with AWS

OMRON Corporation is a leading technology provider in industrial automation, healthcare, and electronic components. In their Shaping the Future 2030 (SF2030) strategic plan, OMRON aims to address diverse social issues, drive sustainable business growth, transform business models and capabilities, and accelerate digital transformation. At the heart of this transformation is the OMRON Data & Analytics Platform (ODAP), an innovative initiative designed to revolutionize how the company harnesses its data assets. This post explores how OMRON Europe is using Amazon Web Services (AWS) to build its advanced ODAP and its progress toward harnessing the power of generative AI.

Generate training data and cost-effectively train categorical models with Amazon Bedrock

In this post, we explore how you can use Amazon Bedrock to generate high-quality categorical ground truth data, which is crucial for training machine learning (ML) models in a cost-sensitive environment. Generative AI solutions can play an invaluable role during the model development phase by simplifying training and test data creation for multiclass classification supervised learning use cases. We dive deep into how to use XML tags to structure the prompt and guide Amazon Bedrock in generating a balanced labeled dataset with high accuracy. We also showcase a real-world example of predicting the root cause category for support cases. This use case, solvable through ML, can enable support teams to better understand customer needs and optimize response strategies.
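As a rough illustration of the XML-tag idea mentioned above, the following Python sketch assembles a tagged prompt and checks whether a model response is balanced across categories. It is not taken from the post: the tag names (`<task>`, `<categories>`, `<example>`, `<label>`, `<text>`), the helper functions, and the sample categories are all hypothetical, and the call to Amazon Bedrock itself is deliberately left out.

```python
import re
from collections import Counter


def build_prompt(categories, examples_per_category, domain):
    """Assemble an XML-tagged prompt requesting a balanced labeled dataset.

    The tag names are illustrative assumptions, not a required schema.
    """
    category_block = "\n".join(f"  <category>{c}</category>" for c in categories)
    return (
        f"<task>Generate synthetic {domain} for classifier training.</task>\n"
        f"<categories>\n{category_block}\n</categories>\n"
        "<instructions>\n"
        f"  Write exactly {examples_per_category} examples for each category.\n"
        "  Wrap each one as "
        "<example><label>...</label><text>...</text></example>.\n"
        "</instructions>"
    )


def parse_examples(response_text):
    """Extract (label, text) pairs from the tagged model response."""
    pattern = (
        r"<example>\s*<label>(.*?)</label>\s*<text>(.*?)</text>\s*</example>"
    )
    matches = re.findall(pattern, response_text, flags=re.DOTALL)
    return [(label.strip(), text.strip()) for label, text in matches]


def is_balanced(labels, categories):
    """Return True when every category appears the same number of times."""
    counts = Counter(labels)
    return len({counts[c] for c in categories}) == 1
```

The prompt string returned by `build_prompt` would be sent to a model of your choice; `parse_examples` and `is_balanced` can then be applied to the response text to confirm that each category received the same number of examples before the data is used for training.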

Build a generative AI enabled virtual IT troubleshooting assistant using Amazon Q Business

Discover how to build a generative AI-powered virtual IT troubleshooting assistant using Amazon Q Business. This solution integrates with popular ITSM tools like ServiceNow, Atlassian Jira, and Confluence to streamline information retrieval and enhance collaboration across your organization. By harnessing the power of generative AI, this assistant can significantly boost operational efficiency and provide 24/7 support tailored to individual needs. Learn how to set up, configure, and use this solution to transform your enterprise information management.

Integrate generative AI capabilities into Microsoft Office using Amazon Bedrock

In this blog post, we showcase a powerful solution that seamlessly integrates AWS generative AI capabilities in the form of large language models (LLMs) based on Amazon Bedrock into the Office experience. By harnessing the latest advancements in generative AI, we empower employees to unlock new levels of efficiency and creativity within the tools they already use every day.

Revolutionizing clinical trials with the power of voice and AI

As the healthcare industry continues to embrace digital transformation, solutions that combine advanced technologies like audio-to-text transcription and LLMs will become increasingly valuable in addressing key challenges, such as patient education, engagement, and empowerment. In this post, we discuss possible use cases for combining speech recognition technology with LLMs, and how the solution can revolutionize clinical trials.

Intelligent healthcare assistants: Empowering stakeholders with personalized support and data-driven insights

Healthcare decisions often require integrating information from multiple sources, such as medical literature, clinical databases, and patient records. LLMs lack the ability to seamlessly access and synthesize data from these diverse and distributed sources. This limits their potential to provide comprehensive and well-informed insights for healthcare applications. In this blog post, we will explore how Mistral LLM on Amazon Bedrock can address these challenges and enable the development of intelligent healthcare agents with LLM function calling capabilities, while maintaining robust data security and privacy through Amazon Bedrock Guardrails.

Benchmarking customized models on Amazon Bedrock using LLMPerf and LiteLLM

This post begins a blog series exploring DeepSeek and open FMs on Amazon Bedrock Custom Model Import. It covers performance benchmarking of custom models in Amazon Bedrock using popular open source tools: LLMPerf and LiteLLM. It includes a notebook with step-by-step instructions for deploying a DeepSeek-R1-Distill-Llama-8B model, but the same steps apply to any other model supported by Amazon Bedrock Custom Model Import.

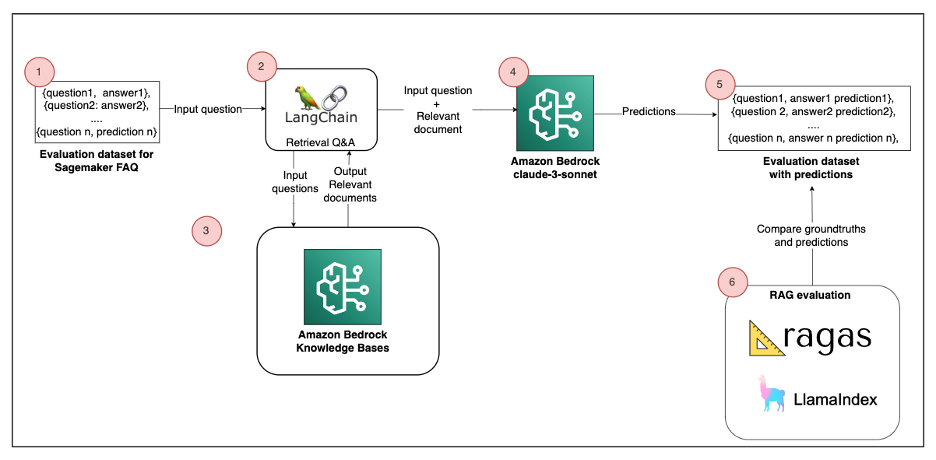

Evaluate RAG responses with Amazon Bedrock, LlamaIndex and RAGAS

In this post, we’ll explore how to leverage Amazon Bedrock, LlamaIndex, and RAGAS to enhance your RAG implementations. You’ll learn practical techniques to evaluate and optimize your AI systems, enabling more accurate, context-aware responses that align with your organization’s specific needs.

Accelerate AWS Well-Architected reviews with Generative AI

In this post, we explore a generative AI solution leveraging Amazon Bedrock to streamline the AWS Well-Architected Framework Review (WAFR) process. We demonstrate how to harness the power of LLMs to build an intelligent, scalable system that analyzes architecture documents and generates insightful recommendations based on AWS Well-Architected best practices. This solution automates portions of the WAFR report creation, helping solutions architects improve the efficiency and thoroughness of architectural assessments while supporting their decision-making process.